Introduction: A Proof of SMW Formula

In the tutorial of MAT2041A Linear Algebra and Applications, I constructed a proof of the Sherman-Morrison-Woodbury Formula to demonstrate the potential of block matrices.

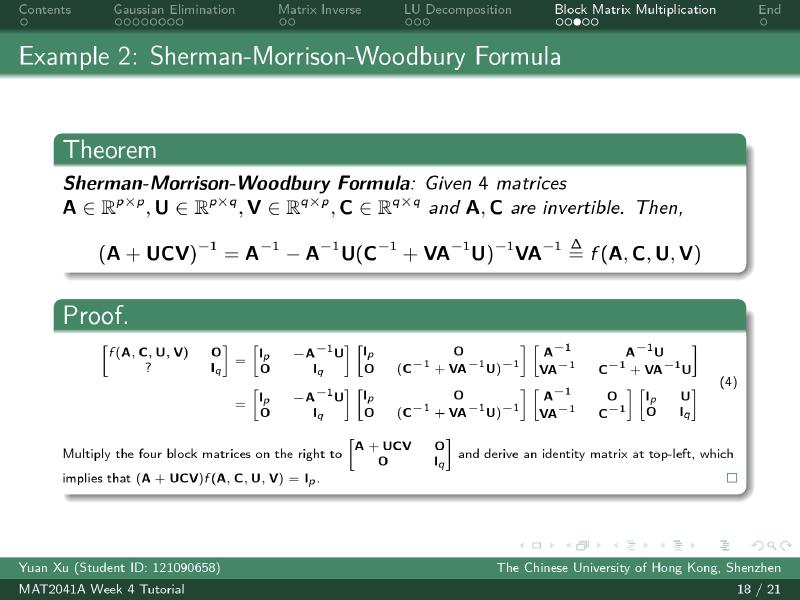

Sherman-Morrison-Woodbury Formula

Sherman-Morrison-Woodbury Formula: Given $4$ matrices $\textbf{A} \in \mathbb{R}^{p\times p},\textbf{U} \in \mathbb{R}^{p\times q},\textbf{V} \in \mathbb{R}^{q\times p},\textbf{C} \in \mathbb{R}^{q\times q}$, where $\textbf{A},\textbf{C}, \begin{bmatrix} \textbf{A} & \textbf{U} \\ \textbf{V} & \textbf{C} \end{bmatrix}$ are invertible. Then,

$$(\textbf{A}+\textbf{U}\textbf{C}\textbf{V})^{-1} = \textbf{A}^{-1} - \textbf{A}^{-1}\textbf{U}(\textbf{C}^{-1}+\textbf{V}\textbf{A}^{-1}\textbf{U})^{-1}\textbf{V}\textbf{A}^{-1} \stackrel{\Delta}{=} f(\textbf{A},\textbf{C},\textbf{U},\textbf{V})$$

Proof

We can observe that

$$\small\begin{aligned}\begin{bmatrix} f(\textbf{A},\textbf{C},\textbf{U},\textbf{V}) & \textbf{O} \\\\ ? & \textbf{I}_q \end{bmatrix} &= \begin{bmatrix} \textbf{I}_p & -\textbf{A}^{-1}\textbf{U} \\\\ \textbf{O} & \textbf{I}_q \end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{O} \\\\ \textbf{O} & (\textbf{C}^{-1}+\textbf{V}\textbf{A}^{-1}\textbf{U})^{-1} \end{bmatrix} \begin{bmatrix} \textbf{A}^{-1} & \textbf{A}^{-1}\textbf{U} \\\\ \textbf{V}\textbf{A}^{-1} & \textbf{C}^{-1}+\textbf{V}\textbf{A}^{-1}\textbf{U} \end{bmatrix} \\\\ &= \begin{bmatrix} \textbf{I}_p & -\textbf{A}^{-1}\textbf{U} \\\\ \textbf{O} & \textbf{I}_q \end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{O} \\\\ \textbf{O} & (\textbf{C}^{-1}+\textbf{V}\textbf{A}^{-1}\textbf{U})^{-1} \end{bmatrix} \begin{bmatrix} \textbf{A}^{-1} & \textbf{O} \\\\ \textbf{V}\textbf{A}^{-1} & \textbf{C}^{-1} \end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{U} \\\\ \textbf{O} & \textbf{I}_q \end{bmatrix}\end{aligned}$$Multiply the four block matrices on the right to $\begin{bmatrix}\textbf{A}+\textbf{U}\textbf{C}\textbf{V} & \textbf{O} \\ \textbf{O} & \textbf{I}_q\end{bmatrix}$ and derive an identity matrix at top-left, which implies that $(\textbf{A}+\textbf{U}\textbf{C}\textbf{V})f(\textbf{A},\textbf{C},\textbf{U},\textbf{V}) = \textbf{I}_p$.

$\square$

Computation

Let $\textbf{D} \stackrel{\Delta}{=} \textbf{C}^{-1}+\textbf{V}\textbf{A}^{-1}\textbf{U}$, then

$$\small\begin{aligned} &\begin{bmatrix} \textbf{A} + \textbf{U}\textbf{C}\textbf{V} & \textbf{O} \\\\ \textbf{O} & \textbf{I} \end{bmatrix} \begin{bmatrix} \textbf{I}_p & -\textbf{A}^{-1}\textbf{U} \\\\ \textbf{O} & \textbf{I}_q\end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{O} \\\\ \textbf{O} & (\textbf{C}^{-1}+\textbf{V}\textbf{A}^{-1}\textbf{U})^{-1} \end{bmatrix} \begin{bmatrix} \textbf{A}^{-1} & \textbf{O} \\\\ \textbf{V}\textbf{A}^{-1} & \textbf{C}^{-1} \end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{U} \\\\ \textbf{O} & \textbf{I}_q \end{bmatrix} \\\\ =& \begin{bmatrix}\textbf{A}+\textbf{U}\textbf{C}\textbf{V} & -\textbf{U}-\textbf{U}\textbf{C}\textbf{V}\textbf{A}^{-1}\textbf{U} \\\\ \textbf{O} & \textbf{I}_q\end{bmatrix} \begin{bmatrix}\textbf{I}_p & \textbf{O} \\\\ \textbf{O} & \textbf{D}^{-1} \end{bmatrix} \begin{bmatrix} \textbf{A}^{-1} & \textbf{O} \\\\ \textbf{V}\textbf{A}^{-1} & \textbf{C}^{-1} \end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{U} \\\\ \textbf{O} & \textbf{I}_q \end{bmatrix} \\\\ =& \begin{bmatrix} \textbf{A}+\textbf{U}\textbf{C}\textbf{V} & -\textbf{U}\textbf{C}\textbf{D}\\\\ \textbf{O} & \textbf{I}_q \end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{O} \\\\ \textbf{O} & \textbf{D}^{-1} \end{bmatrix} \begin{bmatrix} \textbf{A}^{-1} & \textbf{O} \\\\ \textbf{V}\textbf{A}^{-1} & \textbf{C}^{-1} \end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{U} \\\\ \textbf{O} & \textbf{I}_q \end{bmatrix} \\\\ =& \begin{bmatrix} \textbf{A}+\textbf{U}\textbf{C}\textbf{V} & -\textbf{U}\textbf{C} \\\\ \textbf{O} & \textbf{D}^{-1} \end{bmatrix} \begin{bmatrix} \textbf{A}^{-1} & \textbf{O} \\\\ \textbf{V}\textbf{A}^{-1} & \textbf{C}^{-1} \end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{U} \\\\ \textbf{O} & \textbf{I}_q \end{bmatrix} \\\\ =& \begin{bmatrix} \textbf{I}_p & -\textbf{U} \\\\ ? & ? \end{bmatrix} \begin{bmatrix} \textbf{I}_p & \textbf{U} \\\\\textbf{O} & \textbf{I}_q\end{bmatrix} = \begin{bmatrix} \textbf{I}_p & \textbf{O} \\\\? & ?\end{bmatrix} \\\\ \Rightarrow & (\textbf{A}+\textbf{U}\textbf{C}\textbf{V})f(\textbf{A},\textbf{C},\textbf{U},\textbf{V}) = \textbf{I}_p \end{aligned}$$Idea: Punching Hole Technique

The “observed” block matrix multiplication actually creates identity matrices and zero matrices as sub-matrices. The related technique introduced by Hua Loo-Keng is called “Punching Hole” Principle (打洞原理 in Chinese) where the zero matrices are the holes in block matrices.

Rank of Block Matrices

Guttman rank additivity formula: If a block matrix $\mathbf{P}$ of $2\times 2$ sub-matrices with left-top sub-matrix $\mathbf{A}$ nonsingular, then

$$\small\begin{aligned} >\text{rank}(\mathbf{P}) = \text{rank}(\begin{bmatrix} >\mathbf{A} & \mathbf{B} \\\\ >\mathbf{C} & \mathbf{D} >\end{bmatrix}) = \text{rank}(\mathbf{A}) + \text{rank}(\mathbf{D} - \mathbf{C}\mathbf{A}^{-1}\mathbf{B}) >\end{aligned}$$where $\mathbf{D} - \mathbf{C}\mathbf{A}^{-1}\mathbf{B}$ is $\mathbf{A}$’s Schur Complement, written as $\mathbf{P} / \mathbf{A}$.

Proof:

$$\small\begin{aligned} \text{rank}(\begin{bmatrix} \mathbf{A} & \mathbf{B} \\\\ \mathbf{C} & \mathbf{D} \end{bmatrix}) &= \text{rank}(\begin{bmatrix} \mathbf{I} & \mathbf{O} \\\\ -\mathbf{C}\mathbf{A}^{-1} & \mathbf{I} \end{bmatrix} \begin{bmatrix} \mathbf{A} & \mathbf{B} \\\\ \mathbf{C} & \mathbf{D} \end{bmatrix} \begin{bmatrix} \mathbf{I} & -\mathbf{A}^{-1}\mathbf{B} \\\\ \mathbf{O} & \mathbf{I} \end{bmatrix}) \\\\ &= \text{rank}(\begin{bmatrix} \mathbf{A} & \mathbf{B} \\\\ \mathbf{O} & \mathbf{D} - \mathbf{C}\mathbf{A}^{-1}\mathbf{B} \end{bmatrix} \begin{bmatrix} \mathbf{I} & -\mathbf{A}^{-1}\mathbf{B} \\\\ \mathbf{O} & \mathbf{I} \end{bmatrix}) \\\\ &= \text{rank}(\begin{bmatrix} \mathbf{A} & \mathbf{O} \\\\ \mathbf{O} & \mathbf{D} - \mathbf{C}\mathbf{A}^{-1}\mathbf{B} \end{bmatrix}) = \text{rank}(\mathbf{A}) + \text{rank}(\mathbf{D} - \mathbf{C}\mathbf{A}^{-1}\mathbf{B}) \end{aligned}$$$\square$

Determinant of Block Matrices

Extension: Block Matrix Rank Inequality

Using the Punching Hole Technique above, we can derive the following rank inequalities.

Sylvester Inequality

Sylvester Inequality: For any matrices $\mathbf{A} \in \mathbb{R}^{m\times n}, \mathbf{B} \in \mathbb{R}^{n\times k}$, then

$$\text{rank}(\mathbf{AB}) \ge \text{rank}(\mathbf{A}) + \text{rank}(\mathbf{B}) - n$$

Proof: By Guttman rank additivity formula with $\mathbf{A} = \mathbf{I}_n$, then

$$\small \begin{aligned}\text{rank}(\begin{bmatrix} \mathbf{I}_n & \mathbf{B} \\\\ \mathbf{A} & \mathbf{O}_{m\times k}\end{bmatrix}) &= \text{rank}(\begin{bmatrix} \mathbf{I}_{n} & \mathbf{O} \\\\ -\mathbf{A} & \mathbf{I}_{m}\end{bmatrix} \begin{bmatrix} \mathbf{I}_n & \mathbf{B} \\\\ \mathbf{A} & \mathbf{O}_{m\times k}\end{bmatrix} \begin{bmatrix} \mathbf{I}_{n} & -\mathbf{B} \\\\ \mathbf{O} & \mathbf{I}_{k}\end{bmatrix}) \\\\ &= \text{rank}(\begin{bmatrix} \mathbf{I} & \mathbf{O}_{n\times k} \\\\ \mathbf{O}_{m\times n} & -\mathbf{AB} \end{bmatrix}) = \text{rank}(\mathbf{I}_n) + \text{rank}(\mathbf{AB}) = n + \text{rank}(\mathbf{AB}) \end{aligned}$$Therefore,

$$\text{rank}(\mathbf{AB}) = \text{rank}(\begin{bmatrix} \mathbf{I}_n & \mathbf{B} \\\\ \mathbf{A} & \mathbf{O}_{m\times k}\end{bmatrix}) - n \ge \text{rank}(\mathbf{A}) + \text{rank}(\mathbf{B}) - n$$$\square$

Frobenius Inequality

Frobenius Inequality: For any matrices $\mathbf{A} \in \mathbb{R}^{m\times n}, \mathbf{B} \in \mathbb{R}^{n\times k}, \mathbf{C} \in \mathbb{R}^{k\times l}$, then

$$\text{rank}(\mathbf{AB}) + \text{rank}(\mathbf{BC}) \le \text{rank}(\mathbf{ABC}) + \text{rank}(\mathbf{B})$$

Proof: Similar with Guttman rank additivity formula (but note that we don’t have inverse matrix of $\mathbf{A}$),

$$\small \begin{aligned}\text{rank}(\begin{bmatrix} \mathbf{B} & \mathbf{BC} \\\\ \mathbf{AB} & \mathbf{O}_{m\times l}\end{bmatrix}) &= \text{rank}(\begin{bmatrix} \mathbf{I}_{n} & \mathbf{O} \\\\ -\mathbf{A} & \mathbf{I}_{m}\end{bmatrix}\begin{bmatrix} \mathbf{B} & \mathbf{BC} \\\\ \mathbf{AB} & \mathbf{O}_{m\times l}\end{bmatrix} \begin{bmatrix} \mathbf{I}_{k} & -\mathbf{C} \\\\ \mathbf{O} & \mathbf{I}_{l}\end{bmatrix}) = \text{rank}(\begin{bmatrix} \mathbf{B} & \mathbf{O}_{n\times l} \\\\ \mathbf{O}_{m\times k} & -\mathbf{ABC} \end{bmatrix}) = \text{rank}(\mathbf{B}) + \text{rank}(\mathbf{ABC}) \end{aligned}$$Therefore,

$$\text{rank}(\mathbf{AB}) + \text{rank}(\mathbf{BC}) = \text{rank}(\begin{bmatrix} \mathbf{O}_{n\times k} & \mathbf{BC} \\\\ \mathbf{AB} & \mathbf{O}_{m\times l}\end{bmatrix}) \le \text{rank}(\begin{bmatrix} \mathbf{B} & \mathbf{BC} \\\\ \mathbf{AB} & \mathbf{O}_{m\times l}\end{bmatrix}) = \text{rank}(\mathbf{ABC}) + \text{rank}(\mathbf{B})$$$\square$